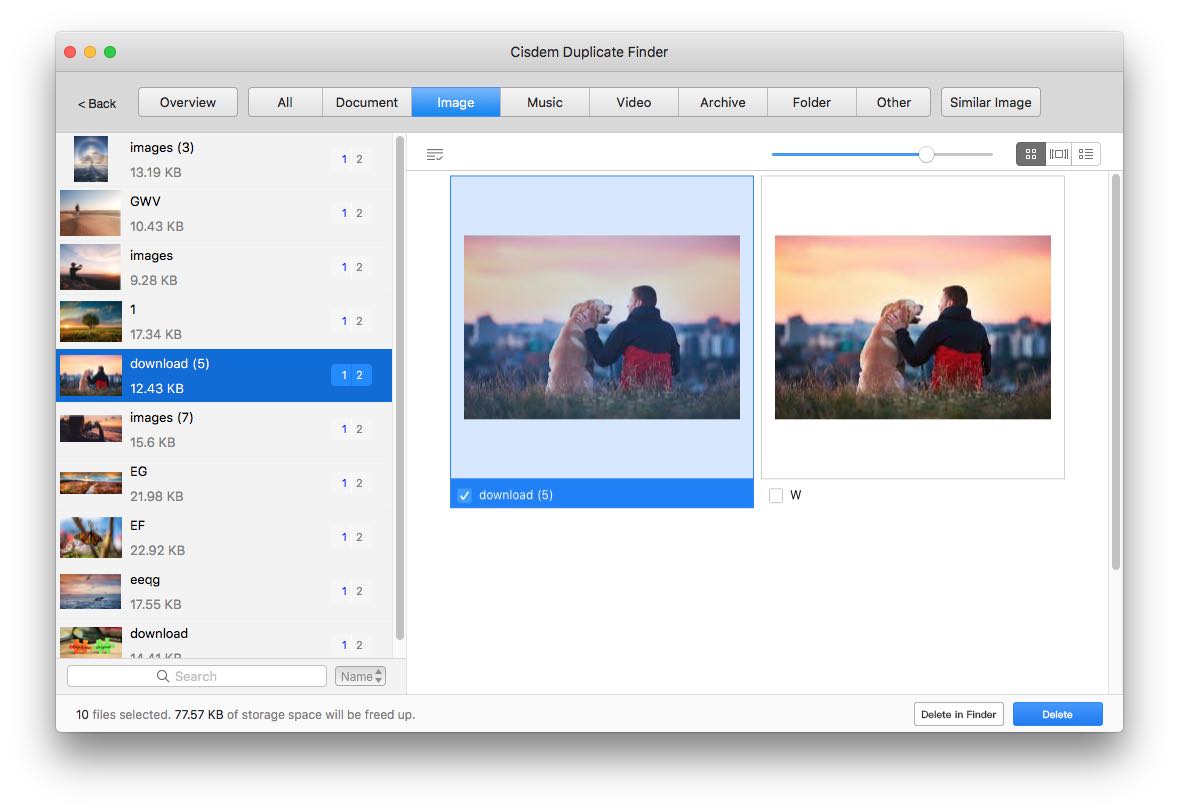

I don't get different results comparing 4.0.4 and 4.1.0. Would you mind filing a bug report on the GitHub issue tracker if you can provide more information? The matching algorithm has not changed, so I don't see why you get "worse" results, apart from using exclusion filters (regex) perhaps.

4.1.0 is not released yet, but it is the same code as the current master branch. 4.0.4 won't work anymore due to the newer Python version used in Arch Linux. Granted, a patch could be written to make it work, but I don't think it's worth the trouble at this point, as 4.1.0 is about to get a proper release.

These are dependencies of the python-sphinx package used for building the documentation.

Description: Detect duplicate files in different formats on your computer and remove them. It also works with picture files, implementing a similar fuzzy algorithm to locate images that may not be the same but are relatively close.

As its name suggests, dupeGuru Picture Edition is all about finding doubles in your image folders. It works with PNG, JPG, GIF, TIFF and BMP files. Pictures can be one of the top sources of duplicates for many people, especially as we migrate to.

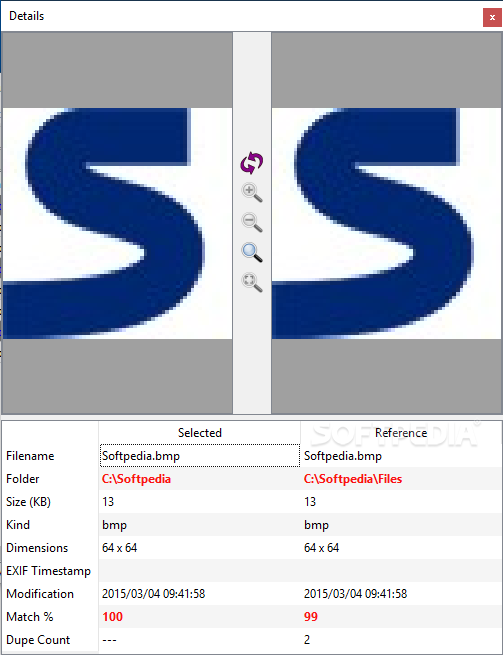

dupeGuru Picture Edition (PE for short) is a tool to find duplicate pictures on your computer. dupeGuru PE is a big brother of dupeGuru. The program uses a search algorithm designed to detect duplicate images even if they have a different extension, size or name.

The fast search algorithm finds duplicates of any file type, e.g., text, pictures, music or movies. The powerful search engine enables you to find duplicates with a combination of the following criteria.

My take is that these build-time packages are not really our dependencies, and since they are only used during the building stage, they will end up as orphans. This is normal behaviour; you can safely remove these packages. Using `makepkg -sr` to build packages should automatically remove makedepends once they are no longer needed.

I have approximately 600GB of photos collected over 13 years, now stored on a FreeBSD ZFS server. The photos come from family computers, from several partial backups to different external USB HDDs, from images reconstructed after disk disasters, and from different photo manipulation software (iPhoto, Picasa, HP and many others :( ), spread over several deep subdirectories. In short: a TERRIBLE MESS with many duplicates.

So far I have:

- searched the tree for files of the same size (fast) and made an md5 checksum for those
- collected the duplicated images (same size + same md5 = duplicate)

This helped a lot, but there are still MANY MANY duplicates:

- photos that differ only in the EXIF/IPTC data added by some photo management software, while the image is the same (or at least "looks the same" and has the same dimensions)
- photos that are only resized versions of the original image
- photos that are "enhanced" versions of the originals, etc.

My questions:

- How can I find duplicates by checksumming only the "pure image bytes" in a JPG, without EXIF/IPTC and similar meta information? I want to filter out the photo duplicates that differ only in their EXIF tags while the image is the same (so file checksumming doesn't work, but image checksumming could). This is (I hope) not very complicated, but I need some direction.
- What Perl module can extract the "pure" image data from a JPG file in a form usable for comparison/checksumming?
- How can I find "similar" images that are only "enhanced" versions of the originals (from some photo manipulation programs)?
- Is there already an algorithm available as a Unix command or a Perl module (XS?) that I can use to detect these special "duplicates"?

I can write complex scripts in Bash and I know Perl more or less :). I can use FreeBSD/Linux utilities directly on the server, and over the network I can use OS X (but working with 600GB over the LAN is not the fastest way).

My rough workflow idea:

- delete images only at the end of the workflow
- use an Image::ExifTool script to collect duplicate image data based on image creation date and camera model (maybe other EXIF data too)
- make a checksum of the pure image data (or extract a histogram, since identical images should have the same histogram); I am not sure about this
- use some similarity detection to find duplicates based on resizing and photo enhancement; I have no idea how to do this

Any idea, help, or (software/algorithm) hint on how to bring order to the chaos?

Here is a nearly identical question: Finding Duplicate image files. But I am already done with that answer (md5) and am looking for more precise checksumming and image comparison algorithms.
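
The first step described in the question (same size, then same md5) can be sketched roughly as below. This is only an illustration in Python rather than the Bash/Perl the poster mentions, and the function names `file_md5` and `find_exact_duplicates` are made up for this example. It uses only the standard library and does not delete anything.

```python
import hashlib
import os
from collections import defaultdict


def file_md5(path, chunk_size=1 << 20):
    """Return the hex md5 of a file, reading it in chunks to bound memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_exact_duplicates(root):
    """Group byte-identical files under root: same size first, then same md5."""
    by_size = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            # Skip symlinks and anything that is not a regular file.
            if os.path.isfile(path) and not os.path.islink(path):
                by_size[os.path.getsize(path)].append(path)

    groups = []
    for paths in by_size.values():
        if len(paths) < 2:
            continue  # a unique size cannot have a duplicate, so skip hashing
        by_hash = defaultdict(list)
        for path in paths:
            by_hash[file_md5(path)].append(path)
        groups.extend(sorted(group) for group in by_hash.values() if len(group) > 1)
    return groups
```

Grouping by size first means only files that share a size with another file are ever hashed, which is why the poster calls that pass fast. The result is a list of lists of paths, so any deletion can be left to the end of the workflow as the question suggests.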

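For the "pure image bytes" question above, one possible approach is to walk the JPEG marker segments and leave the metadata segments out of the checksum. The sketch below is an assumption-laden illustration, not a tested tool: `jpeg_payload_digest` is a hypothetical name, it treats all APPn segments (where EXIF and IPTC data normally live) and COM comment segments as metadata, and it hashes everything from the start-of-scan marker to the end of the file unchanged.

```python
import hashlib
import struct


def jpeg_payload_digest(data: bytes) -> str:
    """Return an md5 over a JPEG byte stream with APPn and COM segments skipped."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG stream (missing SOI marker)")
    digest = hashlib.md5()
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError("expected a marker at offset %d" % pos)
        marker = data[pos + 1]
        if marker == 0xFF:
            pos += 1  # fill byte before a marker, step over it
            continue
        if marker == 0xDA:
            # Start of scan: the compressed image data follows, hash the rest.
            digest.update(data[pos:])
            break
        # Assumption: every other marker before the scan carries a length field.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            raise ValueError("corrupt segment length at offset %d" % pos)
        is_metadata = 0xE0 <= marker <= 0xEF or marker == 0xFE
        if not is_metadata:
            # Keep quantization tables, Huffman tables, frame headers, etc.
            digest.update(data[pos:pos + 2 + length])
        pos += 2 + length
    return digest.hexdigest()
```

Calling `jpeg_payload_digest(open(path, "rb").read())` on two files that differ only in their EXIF or IPTC tags should give the same digest, as long as the software that wrote the tags did not re-encode the image. Resized or "enhanced" copies have different compressed data and will not match this way; they need a similarity measure rather than a checksum.
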